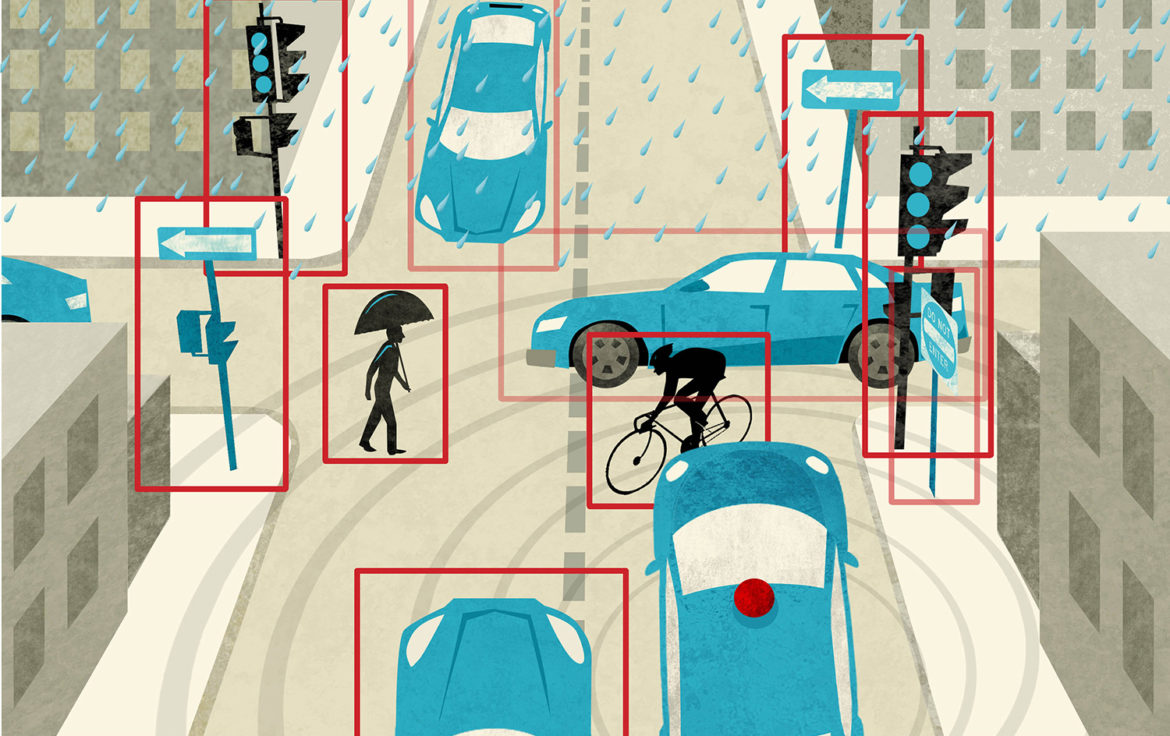

Self-driving cars, without humans in the driver’s seat, are being tested on the streets of some cities. I used to joke with my nieces that once they were driving I would be turning in my driver’s license. Now, I am not sure whether I should be filled with awe at this amazing autonomous technology or fear for my life. There are still many questions about how robots will make critical choices (see Robotic Laws).

The tech companies readily admit that putting autonomous cars on the streets is a form of beta-testing and that there will be accidents involving cars without human drivers. However, the rationale for putting autonomous vehicles on the streets is that, ultimately, robot cars will lead to fewer accidents and fewer traffic fatalities on our roads. This is the argument in an article in Science News: “When it comes to self-driving cars, what’s safe enough?” by Maria Temming, November 21, 2017.

What kind of backlash against these autonomous cars can we expect when an autonomous car collides with a car driven by a private citizen or professional driver? Temming gives us a possible scenario and asks, “What happens when a 4-year-old in the back of a car that’s operated by her mother gets killed by an autonomous car?” This is a real possibility. Will the public be quick to blame the robot or the human? How much will driving conditions factor into the investigation? How will Canadian winters affect autonomous cars? The title of the article is indeed the pertinent question: “What’s safe enough?”
